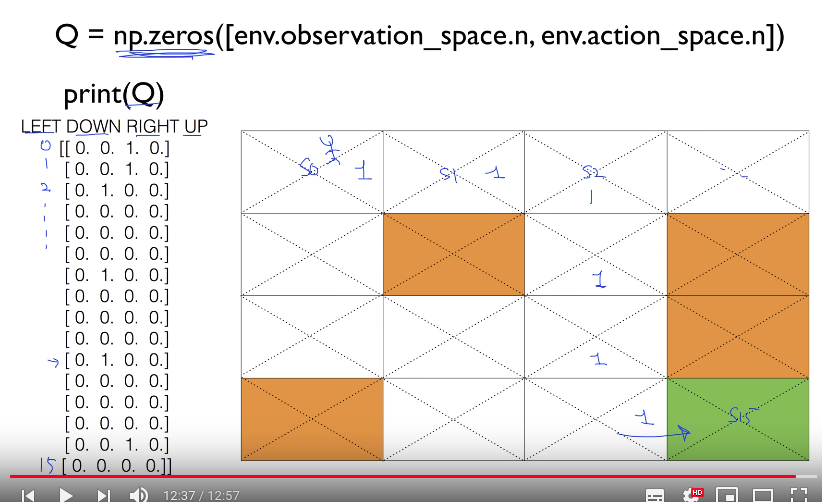

# ================================================================================ import gym import numpy as np import matplotlib.pyplot as plt from gym.envs.registration import register import random as pr # ================================================================================ # Random argmax def rargmax(vector): m=np.amax(vector) indices=np.nonzero(vector==m)[0] returnpr.choice(indices) # ================================================================================ # @ Env options register( id='FrozenLake-v3', entry_point='gym.envs.toy_text:FrozenLakeEnv', kwargs={'map_name':'4x4','is_slippery': False} ) # ================================================================================ # @ Create env env=gym.make('FrozenLake-v3') # ================================================================================ # c Q: (16,4) 2D array Q=np.zeros([env.observation_space.n,env.action_space.n]) # c dis: discount factor for future rewards dis=0.99 # c num_episodes: 2000 episodes num_episodes=2000 # ================================================================================ # c rList: saves "total rewards" and "steps" per each episode rList=[] # ================================================================================ for i in range(num_episodes): # c state: reset env and get current 1st state state=env.reset() rAll=0 # done=True: end of game done=False # ================================================================================ while not done: # c action: chosen action using adding noise to Q values action=np.argmax(Q[state,:]+np.random.randn(1,env.action_space.n)/(i+1)) # ================================================================================ # @ Execute action new_state,reward,done,_=env.step(action) # ================================================================================ # Update Q function using discounted factors to future rewards Q[state,action]=reward+dis*np.max(Q[new_state,:]) # ================================================================================ # @ Accumulate reward rAll+=reward # ================================================================================ # c state: new observed state into current state state=new_state # ================================================================================ # After end of single episode, you append accumulated reward into rList rList.append(rAll) # ================================================================================ print("Success rate: "+str(sum(rList)/num_episodes)) print("Final Q-Table Values") print("LEFT DOWN RIGHT UP") print(Q) plt.bar(range(len(rList)),rList,color="blue") plt.show() # https://raw.githubusercontent.com/youngminpark2559/pracrl/master/shkim-rl/pic/2019_04_22_11:29:11.png # Note that you can see various float numbers as well as 1.0 in Q values # because you use discounted factors on future rewards